PhD thesis post mortem

Posted: February 28, 2018

Category: phd

Hooray! I successfully defended my PhD. The thesis and defense slides are freely available.

It was quite an adventure. Since this thesis sums up the work I've done in the last 3+ years, I thought it'd be interesting to play with (g)it a bit.

I wrote my thesis with org-mode, compiled to LaTeX and then to pdf. I kept all the changes in git from the beginning, which gives a nice opportunity to look back.

So what is your thesis about again?

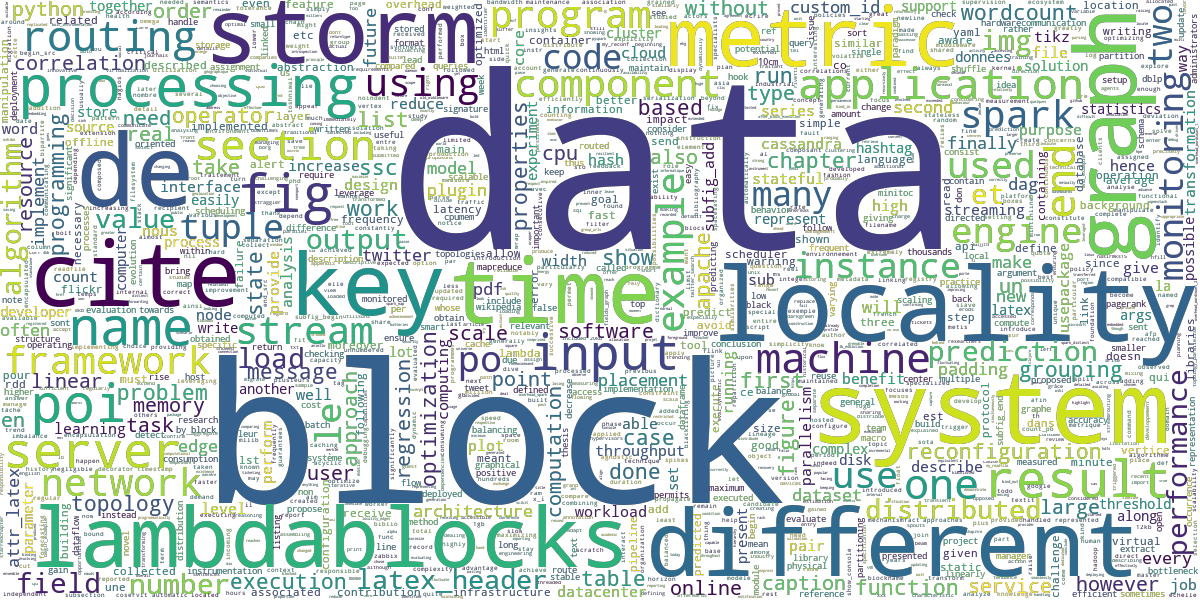

I've heard this question a fair number of times. Well, let's count how often each word appears in the thesis:

grep -oE '\w+' thesis.org | tr '[:upper:]' '[:lower:]' | sort | uniq -c | sort -n > STATS.words

And using the wordcloud library in Python, we can create an image out of this list:

from wordcloud import WordCloud


def text():
    """Rebuild a text in which each word appears as often as its count."""
    alltext = ''
    with open('STATS.words') as f:
        for line in f:
            # each line of STATS.words looks like "<count> <word>"
            count, word = line.strip().split(' ')
            alltext += int(count) * (word + ' ')
    return alltext


def main():
    alltext = text()
    WordCloud(
        width=1200,
        height=600,
        margin=0,
        max_words=100000,
        collocations=False,
        background_color=None,
        mode='RGBA').generate(alltext).to_file('cloud.png')


if __name__ == '__main__':
    main()

I'd say it had something to do with data, systems, and blocks.
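The counting step can also be done without leaving Python. This is only a sketch of an alternative to the grep pipeline above, not what I actually ran; the function name `count_words` and the sample sentence are made up for illustration.

```python
import re
from collections import Counter


def count_words(text):
    """Count lowercased words, roughly like the grep | tr | sort | uniq -c pipeline."""
    return Counter(re.findall(r"\w+", text.lower()))


if __name__ == "__main__":
    sample = "Data blocks and data systems"  # made-up text, not from the thesis
    for word, count in count_words(sample).most_common(3):
        print(count, word)
```

As far as I know, the wordcloud library can also take such a word-to-count mapping directly through `generate_from_frequencies`, which would avoid building the long repeated string.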

SLOC and pdf pages

Now, using the git history, let's look at the evolution of the size of the org-mode file and the number of pages in the final pdf:

#!/bin/bash

firstday=0
for day in {300..0..-1}; do
    git checkout $(git rev-list -n 1 --before="$day days ago" master)

    # org-mode SLOC
    lines=$(wc -l thesis.org)
    if [ $? -eq 0 ]; then
        lines=$(echo $lines | cut -d ' ' -f 1)
        echo x | make
        if [ $? -eq 0 ]; then
            if [ $firstday -eq 0 ]; then
                firstday=$day
            fi
            currentday=$(expr $firstday - $day)
            if [ $currentday -ge 100 ]; then
                break
            fi

            # number of pdf pages
            pages=$(pdfinfo thesis.pdf | grep 'Pages:' | rev | cut -d ' ' -f 1 | rev)

            echo -n $currentday, >> data-days
            echo -n $lines, >> data-sloc
            echo -n $pages, >> data-pages
        fi
    fi
done

We use git checkout in a loop, ranging from 300 days ago to today. This gives us the state of the repository day by day. To skip the repository's first days, when my thesis didn't exist yet, we store in $firstday the first day on which thesis.org is present and compiles. We also use this variable to stop the loop after 100 days, which was my active writing time. On each day we compile the thesis, and if it succeeds we store the following values:

- current day (after $firstday) in data-days (x-axis),
- SLOC of thesis.org in data-sloc (y-axis),
- number of pdf pages after compilation in data-pages (second y-axis).

Plotting the results:

Expectedly, both metrics are growing together. The peaks are due to the inclusion of the conference papers I wrote during the PhD; and the long straight line at the beginning is due to me working in parallel in other repositories, for chapters included later.
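A minimal sketch of how the three data files can be turned into such a plot with matplotlib, using a second y-axis for the pages. This is not necessarily the script behind the figure; the function names and the output file name `evolution.png` are made up for illustration.

```python
def read_series(text):
    """Parse '0,1,2,' (with the trailing comma left by echo -n) into a list of ints."""
    return [int(value) for value in text.split(",") if value.strip()]


def plot(days, sloc, pages, out="evolution.png"):
    # Imported here so that read_series stays usable without matplotlib installed.
    import matplotlib.pyplot as plt

    fig, ax_sloc = plt.subplots()
    ax_sloc.plot(days, sloc, color="tab:blue")
    ax_sloc.set_xlabel("day")
    ax_sloc.set_ylabel("SLOC of thesis.org")

    ax_pages = ax_sloc.twinx()  # second y-axis sharing the same x-axis
    ax_pages.plot(days, pages, color="tab:red")
    ax_pages.set_ylabel("pdf pages")

    fig.savefig(out)


def main():
    # Reads the files written by the git loop in the current directory.
    series = []
    for name in ("data-days", "data-sloc", "data-pages"):
        with open(name) as f:
            series.append(read_series(f.read()))
    plot(*series)
```

Calling `main()` from the directory holding the data files should write the image. The three lists are assumed to have the same length, which holds because the loop appends to all three files in the same iteration.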

Todo items

With the magic of org-mode, the thesis is also a todo list, all in the same file. To keep track of what I was doing and which chapters needed some love, I used this chain as my todo item states:

TODO → STARTED (working on it) → REVIEW (to be proofread by my advisor) → DONE

Let's look at the evolution of the number of items in these 4 states, adding the following in the git loop:

for state in TODO STARTED REVIEW DONE; do
    items=$(grep -e "\*\** $state" thesis.org | wc -l)
    echo -n $items, >> data-items-$state
done

Most items were only marked DONE at the end of the writing, when it was rush time, and it was always hard to decide that they didn't need any more refactoring. The number of STARTED items is always low, which means I didn't work on everything at the same time (I'm pretty sure there's a catch here, that's not how I remember it). Finally, some TODO items remain at the end: they were things to do after writing (e.g. thank the future reviewers, publish the final version, etc).
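The same count can be sketched in Python. Unlike the grep above, this version anchors the match at the start of a line, so a state name mentioned in the middle of a sentence is not counted; that is a deliberate tightening, not what the shell loop does. The name `count_states` and the sample outline are made up for illustration.

```python
import re
from collections import Counter

STATES = ("TODO", "STARTED", "REVIEW", "DONE")

# One or more stars at the start of a line, a space, then a state keyword.
HEADING = re.compile(r"^\*+ (" + "|".join(STATES) + r")\b", re.MULTILINE)


def count_states(org_text):
    """Count org-mode headings per todo state, including states with no heading."""
    counts = Counter(HEADING.findall(org_text))
    return {state: counts[state] for state in STATES}


if __name__ == "__main__":
    # Made-up outline, not taken from the thesis.
    sample = (
        "* DONE Introduction\n"
        "** STARTED Background\n"
        "** TODO Related work\n"
        "* TODO Thank reviewers\n"
    )
    print(count_states(sample))
```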

Lines added and removed

When was I the most productive? We can ask git:

log=$(git log --no-merges --pretty=tformat:'%ad' --date='format:day:%A hour:%H' --numstat thesis.org | paste - - -)

for day in Monday Tuesday Wednesday Thursday Friday Saturday Sunday; do
    log_day=$(echo "$log" | grep "day:$day" - | cut -f 3-4 | awk '{added += $1; deleted += $2} END {print added " " deleted}')
    echo $day $log_day >> data-commit-days
done

for hour in $(seq 0 23); do
    hour=$(printf %02d $hour)
    log_hour=$(echo "$log" | grep "hour:$hour" - | cut -f 3-4 | awk '{added += $1; deleted += $2} END {print added " " deleted}')
    echo $hour $log_hour >> data-commit-hours
done

This tells us I worked well on Mondays and Tuesdays, and rested well on Saturdays. Also, I didn't work much during the nights.
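For those who prefer to avoid the grep, cut and awk chain, here is a hedged Python sketch of the per-day aggregation. It assumes the same tab-separated lines that `paste - - -` produces above (date header, empty field, added, deleted, file name); the function name `churn_by_day` and the sample lines are made up for illustration.

```python
from collections import defaultdict


def churn_by_day(log_lines):
    """Sum added and deleted lines per weekday from pasted git log lines."""
    totals = defaultdict(lambda: [0, 0])
    for line in log_lines:
        fields = line.rstrip("\n").split("\t")
        # Expected layout: "day:<name> hour:<HH>", "", added, deleted, file
        if len(fields) < 4 or not (fields[2].isdigit() and fields[3].isdigit()):
            continue  # skip lines without numeric counts
        day = fields[0].split()[0].split(":", 1)[1]
        totals[day][0] += int(fields[2])
        totals[day][1] += int(fields[3])
    return {day: tuple(counts) for day, counts in totals.items()}


if __name__ == "__main__":
    # Made-up log lines, not real commits.
    sample = [
        "day:Monday hour:09\t\t10\t2\tthesis.org",
        "day:Monday hour:14\t\t5\t1\tthesis.org",
        "day:Saturday hour:11\t\t1\t0\tthesis.org",
    ]
    for day, (added, deleted) in churn_by_day(sample).items():
        print(day, added, deleted)
```

Grouping by hour would work the same way, taking the second word of the header instead of the first.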

PDF timelapse

Finally, let's create a timelapse of the generated pdf file, taking inspiration from a colleague. For that purpose, we create an image file containing the thumbnails of the pdf, for every iteration of the git loop:

convert thesis.pdf 'bitmap-%03d.png'

currentday=$(printf %03d $currentday)

montage -tile 18x8 -geometry 200x300 bitmap-*.png allbitmap-$currentday.png

rm bitmap-*.png

We first convert the pdf into a list of png files (one per page) with convert, before assembling these thumbnails with montage (both of these tools are part of ImageMagick). To keep the list of files sorted numerically, we pad $currentday with leading zeros.
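Why the padding matters is easy to show in a few lines of Python: file names are sorted as strings, so without leading zeros day 10 would come before day 2. The helper names `frame_name` and `frame_day` are made up for illustration; only the `allbitmap-NNN.png` pattern comes from the commands above.

```python
def frame_name(day):
    """Build the montage file name for a day, zero-padded to 3 digits."""
    return f"allbitmap-{day:03d}.png"


def frame_day(name):
    """Recover the day number from a file name; int() reads '008' as decimal 8."""
    return int(name[len("allbitmap-"):-len(".png")])


if __name__ == "__main__":
    days = [10, 2, 100]
    print(sorted(str(d) for d in days))        # string order without padding
    print(sorted(frame_name(d) for d in days))  # padded names sort by day
```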

Once this is done, we have a list of thumbnails, one for each iteration of the file. But many of them are identical, so we remove duplicates to get a smoother video later:

lastfile=$(ls *.png | head -n 1)
for file in $(ls *.png | tail -n +2); do
    echo "Comparing $lastfile and $file"
    hexdump $lastfile > 0.dump
    hexdump $file > 1.dump
    if [ $(diff 0.dump 1.dump | wc -l) -le 6 ]; then
        rm $file
    else
        lastfile=$file
    fi
    rm 0.dump 1.dump
done

To know if two sequential images are the same, we diff their hexdumps and treat them as identical when the diff is at most 6 lines long: quite hackish but it does the job.
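A loosely analogous check can be sketched in Python by comparing the two files in 16-byte rows, the row width hexdump uses by default. This is an approximation and not equivalent to counting diff output lines; the names `differing_rows` and `is_duplicate` and the threshold `max_rows=2` are assumptions chosen for illustration, the latter only a guess at what a 6-line diff allows.

```python
def differing_rows(a, b, width=16):
    """Count width-byte rows that differ between two byte strings."""
    length = max(len(a), len(b))
    return sum(
        a[i:i + width] != b[i:i + width]
        for i in range(0, length, width)
    )


def is_duplicate(a, b, max_rows=2):
    # max_rows is a hypothetical tolerance, not a value taken from the shell loop.
    return differing_rows(a, b) <= max_rows


if __name__ == "__main__":
    first = bytes(64)                      # made-up content, 4 rows of zeros
    second = bytes([1]) + bytes(63)        # differs in the first row only
    print(differing_rows(first, second), is_duplicate(first, second))
```

In practice the two arguments would be the contents of two png files read in binary mode. Tolerating a couple of differing rows presumably absorbs small metadata differences between otherwise identical images, but that is my guess.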

Above each picture, we add a caption with the day number:

for filenumber in $(ls *.png | cut -b 11-13); do
    file=allbitmap-$filenumber.png
    # strip the leading zeros (10# forces base 10, so 008 is not read as octal)
    filenumber=$((10#$filenumber))
    convert $file -background '#990000' -fill white -pointsize 100 label:"Day $filenumber" -splice 0x50 +swap -gravity Center -append $file
done

Finally, we create a video out of the remaining files:

ffmpeg -r 2 -pattern_type glob -i '*.png' -vf scale=1200:-1 bitmap.webm

If you can't play the video, you can get it here.

If you have other interesting thesis statistics ideas, let me know in the comments!